Check Synchronous Relay Working Principle:

A check synchronous relay, also called an SKE relay, is used to protect the generator against synchronization under mismatched conditions. Closing the breaker while the two sources are mismatched causes heavy circulating currents to flow in the generator windings. Before discussing the SKE relay, what is synchronization? Synchronization means matching two generators, or one generator with the grid, so that they can share the load. In this case, the generator circuit breaker cannot simply be closed the way it normally is; the conditions on both sides must match first.

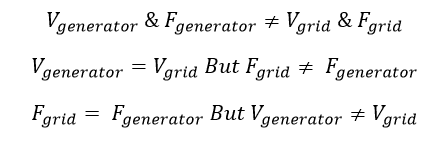

To synchronize, the following three essential conditions must be satisfied:

- The voltages of the two generators should be equal. When a single generator is synchronized with the grid, the generator voltage should equal the grid voltage.

- The frequencies of the two sources should be equal.

- The phase sequence should be the same, i.e. if the phase sequence of one generator is R, Y, B, the phase sequence of the other generator or the grid should also be R, Y, B. In each three-phase system, the three phases are displaced by 120° from one another.
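The three conditions above can be expressed as a small Python sketch. It assumes each side of the breaker is described by a measured voltage, frequency and phase sequence; the `Source` class, the helper functions and the tolerance values are hypothetical and chosen only for illustration, not taken from any relay. Note that R-Y-B, Y-B-R and B-R-Y are the same rotation, so the phase-sequence check compares rotations rather than the raw labels.

```python
from dataclasses import dataclass


@dataclass
class Source:
    """Quantities measured on one side of the breaker (hypothetical model)."""
    voltage_v: float        # RMS voltage in volts
    frequency_hz: float     # frequency in hertz
    phase_sequence: tuple   # e.g. ("R", "Y", "B")


def same_phase_sequence(a: tuple, b: tuple) -> bool:
    """True if b is a rotation of a (R-Y-B == Y-B-R == B-R-Y; R-B-Y is reversed)."""
    return len(a) == len(b) and any(b[i:] + b[:i] == a for i in range(len(b)))


def synchronizing_conditions(incoming: Source, running: Source,
                             v_tol_v: float, f_tol_hz: float) -> dict:
    """Evaluate the three synchronizing conditions between two sources.

    The tolerances are illustrative parameters, not values from a relay manual.
    """
    return {
        "voltage_equal": abs(incoming.voltage_v - running.voltage_v) <= v_tol_v,
        "frequency_equal": abs(incoming.frequency_hz - running.frequency_hz) <= f_tol_hz,
        "phase_sequence_same": same_phase_sequence(incoming.phase_sequence,
                                                   running.phase_sequence),
    }


generator = Source(11050.0, 50.02, ("R", "Y", "B"))
grid = Source(11000.0, 50.0, ("R", "Y", "B"))
reversed_grid = Source(11000.0, 50.0, ("R", "B", "Y"))  # wrong phase sequence

print(synchronizing_conditions(generator, grid, v_tol_v=100.0, f_tol_hz=0.1))
# all three conditions True
print(synchronizing_conditions(generator, reversed_grid, v_tol_v=100.0, f_tol_hz=0.1))
# phase_sequence_same is False
```

In the second call the voltage and frequency match, but the reversed phase sequence alone is enough to make synchronization unsafe.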

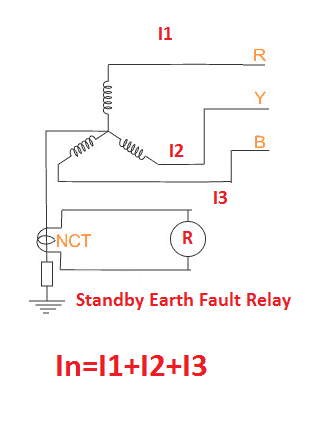

The check synchronous relay monitors the voltage and frequency at both the generator end and the grid end.

Check Synchronous Relay Working Principle:

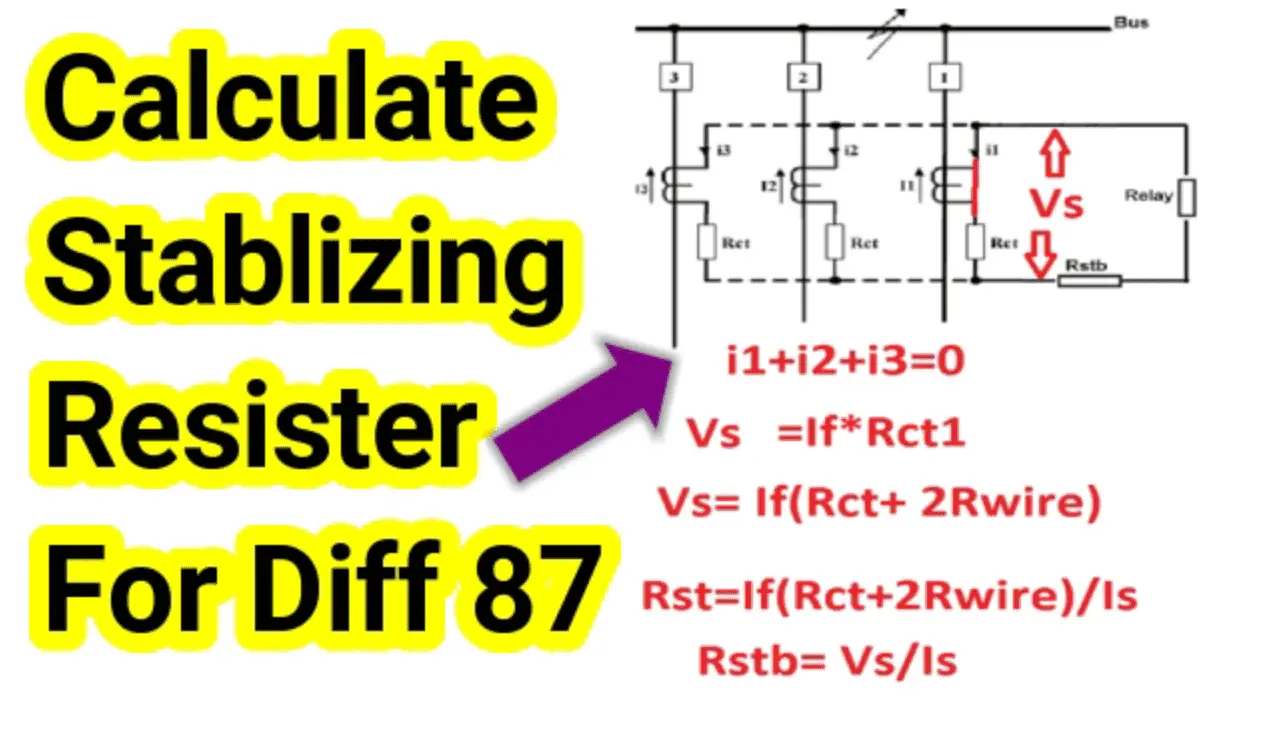

In an electromagnetic check synchronous relay, the operating torque is directly proportional to the voltage and frequency. One voltage is taken from the grid side through a potential transformer (PT), and a second reference voltage is taken from the generator side (the machine to be synchronized) through another PT. As long as the voltage or frequency at the two ends is not equal, the operating torque and the restraining torque are not equal, and this unbalance blocks the generator breaker closing command.

If the voltage and frequency of both sources are equal, the operating torque and the restraining torque are equal, so the relay releases the breaker closing contact and the two power sources can be synchronized. The relay keeps blocking the closing command as long as any of the three conditions listed above is not satisfied.
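The blocking behaviour described above can be modelled, very loosely, as a loop over successive measurements. This sketch replaces the electromagnetic torque balance with a plain numeric comparison of voltage and frequency differences; it shows only the close-permissive decision, not how an SKE relay is built. The function name, the reading format and the tolerances are hypothetical.

```python
from typing import Iterable, Optional, Tuple

# One reading: (generator_voltage_V, generator_frequency_Hz, grid_voltage_V, grid_frequency_Hz)
Reading = Tuple[float, float, float, float]


def first_close_permitted(readings: Iterable[Reading],
                          v_tol_v: float, f_tol_hz: float) -> Optional[int]:
    """Return the index of the first reading at which closing is released.

    While voltage or frequency differ by more than the tolerance, the close
    command stays blocked; None means it stayed blocked for every reading.
    """
    for i, (gen_v, gen_f, grid_v, grid_f) in enumerate(readings):
        voltage_ok = abs(gen_v - grid_v) <= v_tol_v
        frequency_ok = abs(gen_f - grid_f) <= f_tol_hz
        if voltage_ok and frequency_ok:
            return i  # balanced: release the breaker closing command
    return None  # never balanced: closing remains blocked


# Hypothetical readings while the generator is brought up to grid conditions.
samples = [
    (10500.0, 49.0, 11000.0, 50.0),  # voltage and frequency too low: blocked
    (10900.0, 49.6, 11000.0, 50.0),  # voltage OK, frequency 0.4 Hz low: blocked
    (11050.0, 49.9, 11000.0, 50.0),  # both within tolerance: released
]
print(first_close_permitted(samples, v_tol_v=165.0, f_tol_hz=0.2))  # prints 2
```

Closing is released only at the third reading, when both the voltage and the frequency differences fall inside their bands at the same time.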


Check Synchronous Relay Setting:

This relay has a setting for the allowable percentage mismatch of voltage and frequency. I have used a check synchronous relay with a setting of 1.5% mismatch. This means synchronization can still be done with up to 1.5% voltage or frequency mismatch.

Example: Consider a generator with a rated voltage of 11000 V and a frequency of 50 Hz, connected to a grid at 11000 V and 50 Hz. With the 1.5% setting, the relay allows a voltage difference of up to 165 V (1.5% of 11000 V) for synchronization.
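The arithmetic in this example is just the percentage setting applied to the rated value. A minimal sketch, assuming (as the text above states) that the same 1.5% setting applies to both voltage and frequency; the function name is hypothetical.

```python
def allowed_mismatch(rated_value: float, setting_percent: float) -> float:
    """Convert a percentage mismatch setting into an absolute band."""
    return rated_value * setting_percent / 100.0


print(allowed_mismatch(11000.0, 1.5))  # 165.0 -> volts allowed at 11000 V
print(allowed_mismatch(50.0, 1.5))     # 0.75  -> hertz allowed at 50 Hz
```

So at 11000 V and 50 Hz, a 1.5% setting corresponds to about 165 V and 0.75 Hz.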

Note: Always keep the voltage at the generator end slightly higher than the voltage at the grid end.
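The note above can be combined with the voltage band as a simple check. This is a hypothetical helper that treats the note as an operating rule (generator voltage at or above grid voltage, but still within the allowed band); the article does not say whether the relay itself enforces the direction of the difference.

```python
def generator_voltage_ok(gen_v: float, grid_v: float, band_v: float) -> bool:
    """True if the generator voltage is not below the grid voltage and the
    difference stays within the allowed band (hypothetical helper)."""
    difference = gen_v - grid_v
    return 0.0 <= difference <= band_v


print(generator_voltage_ok(11100.0, 11000.0, 165.0))  # True: 100 V higher
print(generator_voltage_ok(10950.0, 11000.0, 165.0))  # False: generator below grid
print(generator_voltage_ok(11200.0, 11000.0, 165.0))  # False: 200 V exceeds band
```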

Check Synchronous Relay ANSI Code: 25
